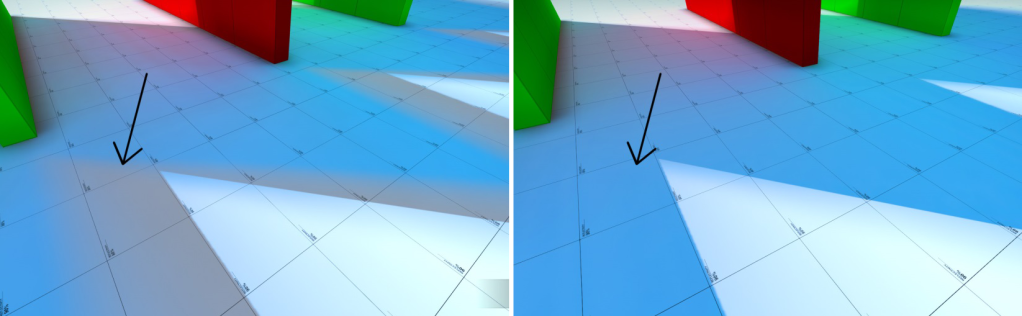

CSM stands for Cascaded Shadow Maps, one of the most commonly used techniques for rendering sun shadows, or shadows from any large directional light that covers a big area of a virtual scene (it can also be used for other light types). For more details, check out my blog posts on Shadows and CSM.

We shipped the first version of CSM in content update 4 back in 2016, and you can check all the details in this blog post – CSM in source engine. This blog post will only discuss improvements and changes made since then.

We had to tackle two critical tasks to lay the foundation for the XenEngine upgrade. The first was upgrading the engine from Shader Model 2.0 to Shader Model 3.0. The second was setting up a reliable G-buffer with precise depth values and accurate world position reconstruction. This step was crucial not only for enhancing CSM but also for other parts of the XenEngine.
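To make the "world position reconstruction" part concrete, here is a minimal C++ sketch of the idea. It is not the actual XenEngine code: it assumes the G-buffer stores linear depth measured along the camera's forward axis, and the `Vec3`, `Camera`, and `ReconstructWorldPosition` names are hypothetical. In a real renderer this math would typically live in a pixel shader.

```cpp
#include <cassert>

struct Vec3 { float x, y, z; };

// Hypothetical camera description; all names here are illustrative only.
struct Camera
{
    Vec3 position;            // world-space eye position
    Vec3 right, up, forward;  // orthonormal world-space basis vectors
    float tanHalfFovY;        // tan(vertical field of view / 2)
    float aspect;             // viewport width / height
};

// Rebuilds a world-space position from a screen UV and a linear depth value.
// Assumes u, v are in [0, 1] with (0, 0) at the top-left of the screen, and
// linearDepth is the distance along the camera's forward axis.
Vec3 ReconstructWorldPosition(const Camera& cam, float u, float v, float linearDepth)
{
    assert(linearDepth >= 0.0f);

    // Map UV to normalized device coordinates in [-1, 1], flipping v so +y is up.
    const float ndcX = u * 2.0f - 1.0f;
    const float ndcY = 1.0f - v * 2.0f;

    // Offsets from the view axis at this depth, derived from the frustum shape.
    const float viewX = ndcX * cam.tanHalfFovY * cam.aspect * linearDepth;
    const float viewY = ndcY * cam.tanHalfFovY * linearDepth;

    // Move from the eye along the camera basis to reach the world-space point.
    return Vec3{
        cam.position.x + cam.right.x * viewX + cam.up.x * viewY + cam.forward.x * linearDepth,
        cam.position.y + cam.right.y * viewX + cam.up.y * viewY + cam.forward.y * linearDepth,
        cam.position.z + cam.right.z * viewX + cam.up.z * viewY + cam.forward.z * linearDepth
    };
}
```

With a camera at (1, 2, 3) looking down +z, the screen center at depth 10 maps to (1, 2, 13). Any error in the stored depth scales directly into the reconstructed position, which is why precise depth values matter so much here.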

VRAD is the light mapper, or light baking tool, in the Source Engine. We also integrated Valve's CSGO CSM update into BlackMesa to pick up all of its improvements to baked shadows. More details about this update can be found on CSGO Lighting and Shader Improvements.

Well, this series is long overdue. I was about to post some of the latest progress from my DX12 hobby engine when I realized I had never finished or published these blog posts based on my work on BlackMesa. Better late than never, I will release all these posts before I post anything about my new engine.

A gentle reminder: BlackMesa is based on DX9 and older versions of C++ etc., and those ancient development tools are no longer supported and are very unstable, especially on Windows 10.

It took me a while to set up all the development tools again on a separate OS / SSD. Finding the right combination of Nsight & Gfx driver versions that would work for at least 10 mins before crashing was time-consuming. I wanted to do complete frame graph breakdowns from Nsight on multiple scenes with numbers, but it would be very hard/time-consuming to do it all.

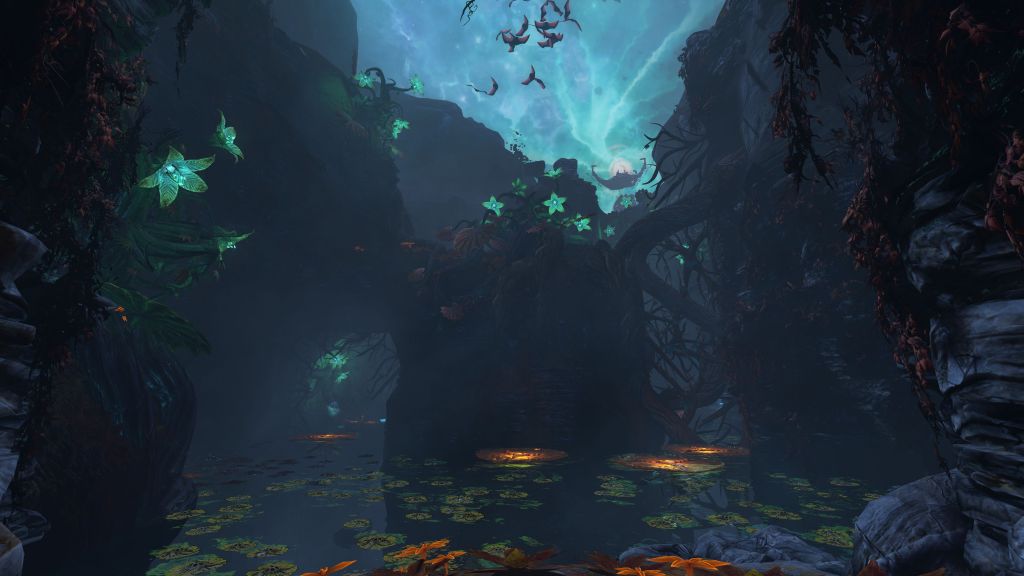

For the past 6+ years, I have been working with an incredible team at Crowbar Collective on BlackMesa. It is a remake of one of the best games, Half-Life, released in 1998. I got into gaming because of Doom, Half-Life, and Street Fighter, so I was excited when I got the opportunity to work on BlackMesa.

I have started the next iteration of JustAnotherGameEngine, JAGE5, from scratch. I am working with DirectX 12 for now, and if it ever reaches a good, feature-rich point I might look into Vulkan and other platforms. I am also embracing the cool new features of C++14/17/20, which I have not had the chance to experiment with yet. Another objective this time is to go multi-threaded as soon as the renderer reaches a certain point: it should be able to render scenes from FBX and similar formats with basic lighting and other common features.

I am embracing third-party open source libraries and middleware a bit more this time to get the new engine up and running as soon as possible. I am still going to try to maintain a balance, because I want to make a game engine, not assemble one, and the plan is to code everything on the rendering side myself.

Check out the JAGE5 page for more details and its current progress.

I have been working on the Source Engine for the past few months with a wonderful team at Crowbar Collective on a project called BlackMesa. It is a remake of one of the best games of all time, Half-Life, which was originally released in 1998. One of the things I have been working on for the past couple of months is cascaded shadow maps.

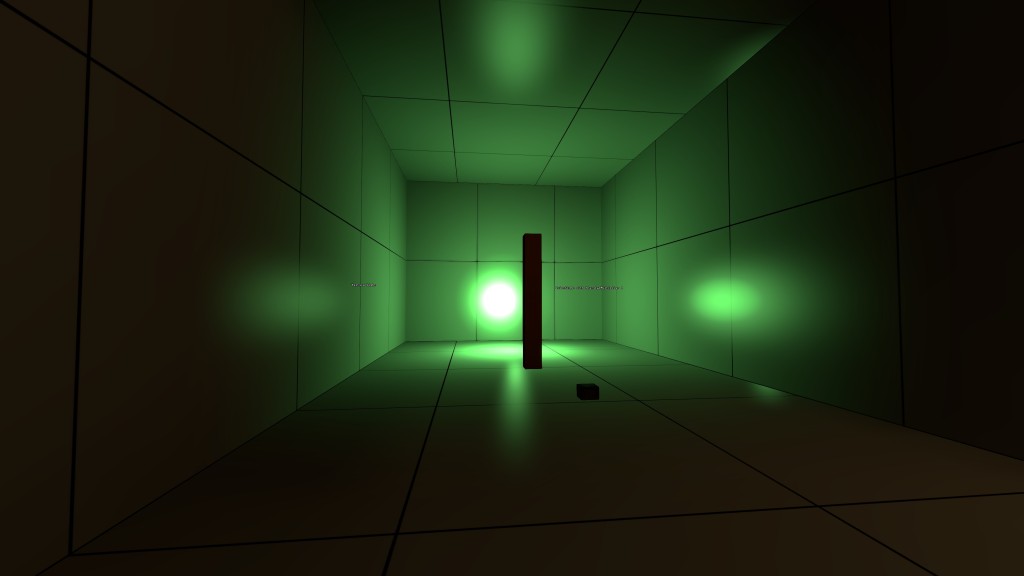

Shadows are one of the most important aspects of making a virtual scene look realistic and making games more immersive. They provide key details of object placements in the virtual world and can be pretty crucial to gameplay as well.

The Source Engine (at least the version of the engine we have) doesn’t have a high-quality shadow system that works flawlessly on both static and dynamic objects. Even the static shadows from VRAD aren’t that great unless we use some crazy high resolutions for light maps, which greatly increases both the compile times and the map size. And shadows on models just don’t work properly, since models are all vertex lit. So we needed a dynamic, very high-quality shadow system, which is something very common nowadays in real-time rendering applications and games.

One of the most popular ways of implementing shadows is the shadow mapping algorithm. CSM, or Cascaded Shadow Maps, is an extension of that algorithm that generates high-quality shadows while avoiding the aliasing artifacts and other limitations of vanilla shadow mapping. For more details check this.
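As a rough illustration of how the cascades are typically laid out, here is a small C++ sketch of the widely used "practical split scheme", which blends logarithmic and uniform splits of the view depth range. This is a generic example, not the BlackMesa implementation; the function names and the `lambda` blend parameter are assumptions made for this sketch.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Returns the far distance (view-space depth) of each cascade.
// lambda = 0 gives purely uniform splits, lambda = 1 gives purely
// logarithmic splits; values in between blend the two.
std::vector<float> ComputeCascadeSplits(float nearZ, float farZ, int cascadeCount, float lambda)
{
    assert(nearZ > 0.0f && farZ > nearZ && cascadeCount > 0);

    std::vector<float> splits(cascadeCount);
    for (int i = 0; i < cascadeCount; ++i)
    {
        const float t = float(i + 1) / float(cascadeCount);
        const float logSplit = nearZ * std::pow(farZ / nearZ, t);  // dense near the camera
        const float uniformSplit = nearZ + (farZ - nearZ) * t;     // evenly spaced
        splits[i] = lambda * logSplit + (1.0f - lambda) * uniformSplit;
    }
    return splits;
}

// Picks the first cascade whose far distance covers the given view depth.
// Depths beyond the last split fall back to the last cascade.
int SelectCascade(const std::vector<float>& splits, float viewDepth)
{
    assert(!splits.empty());
    for (int i = 0; i < static_cast<int>(splits.size()); ++i)
    {
        if (viewDepth <= splits[i])
            return i;
    }
    return static_cast<int>(splits.size()) - 1;
}
```

For example, with a near plane of 1, a far plane of 1000, three cascades, and `lambda = 1`, the splits land at roughly 10, 100, and 1000, so a pixel at view depth 50 would sample the second cascade. Each cascade gets its own shadow map, which is how the technique keeps texel density high near the camera, where aliasing is most visible.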

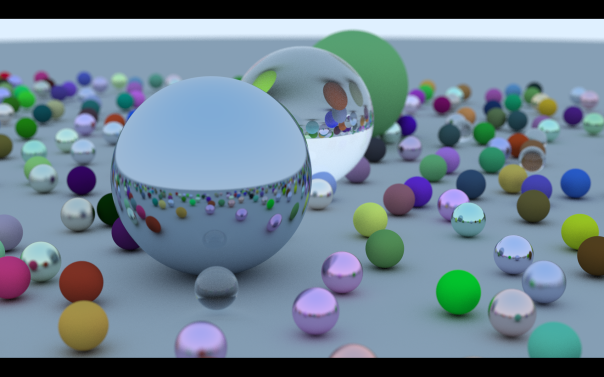

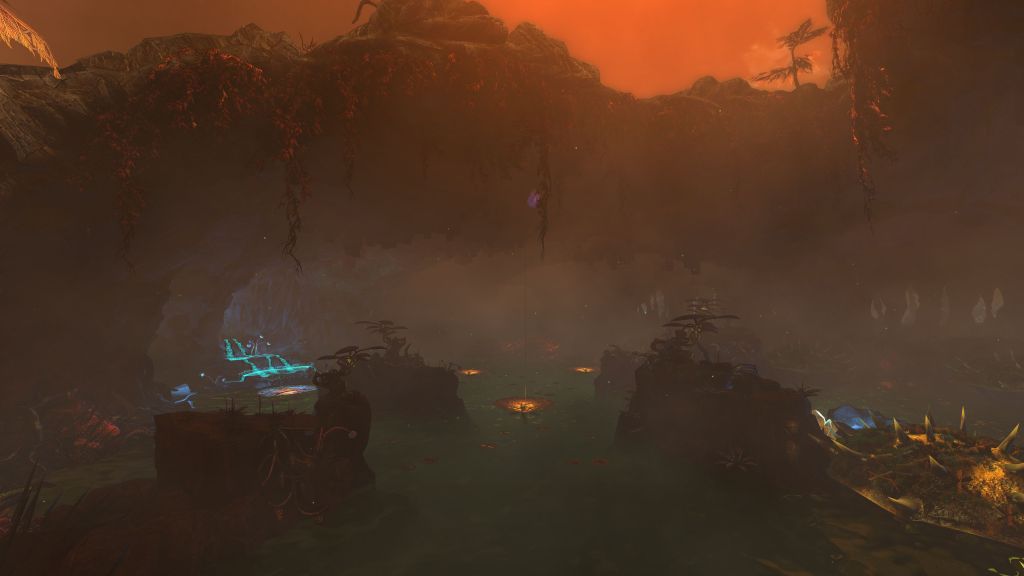

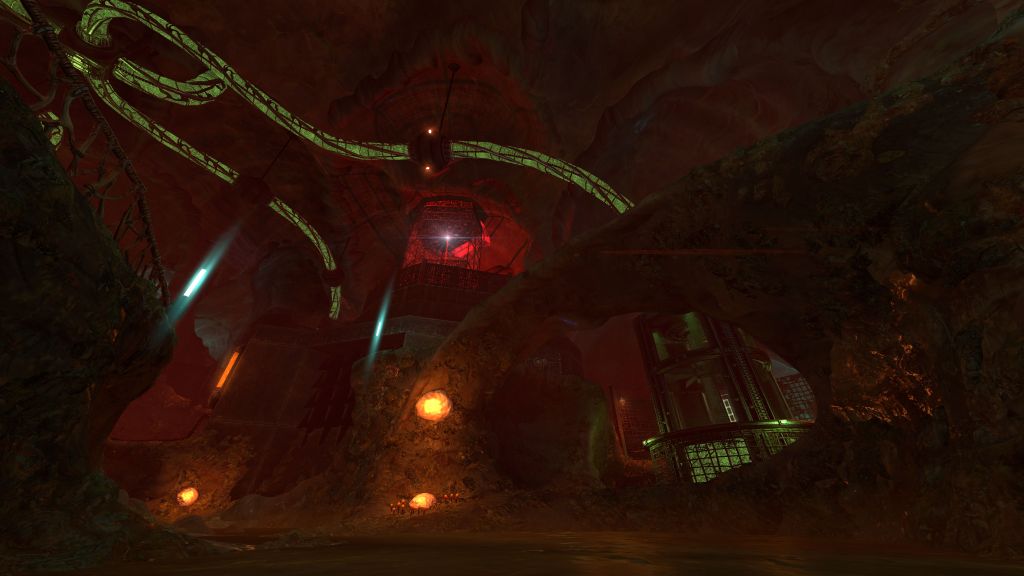

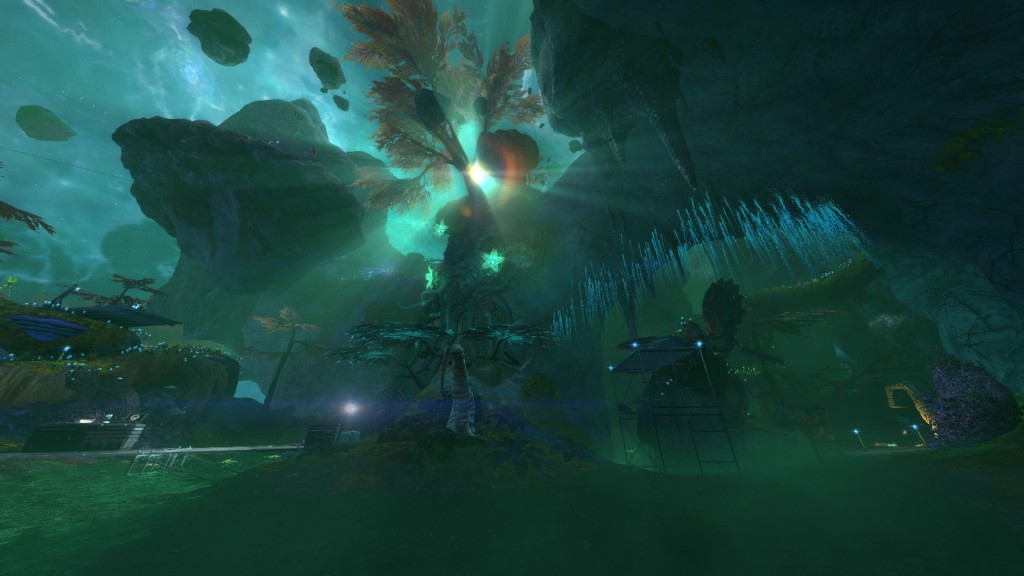

Here’s a screenshot of one of the levels from the upcoming content update: